For view $k$ of sample $i$, the standard InfoNCE loss used in contrastive learning (e.g., SimCLR) is:

$$\mathcal{L}_i^{(k)} = -\log \frac{\exp(\langle \mathbf{z}_i^{(1)}, \mathbf{z}_i^{(2)} \rangle / \tau)}{\exp(\langle \mathbf{z}_i^{(1)}, \mathbf{z}_i^{(2)} \rangle / \tau) + U_{i,k}} \tag{1}$$

where $U_{i,k}$ denotes the sum of the negative terms for view $k$ of sample $i$:

$$U_{i,k} = \sum_{l \in \{1,2\},\; j \in [\![1,N]\!],\; j \neq i} \exp(\langle \mathbf{z}_i^{(k)}, \mathbf{z}_j^{(l)} \rangle / \tau) \tag{2}$$

$\tau$ is the temperature parameter, and $N$ is the batch size.
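To make Eqs. (1) and (2) concrete, here is a minimal pure-Python sketch for a single sample and view. It assumes the two views are stored as lists with `z1[j]` $= \mathbf{z}_j^{(1)}$ and `z2[j]` $= \mathbf{z}_j^{(2)}$ (0-based indices), and that $\langle\cdot,\cdot\rangle$ is a plain dot product on embeddings that have already been L2-normalized. The helper names `dot`, `negative_sum`, and `info_nce` are hypothetical, not taken from any library.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product <a, b> (assumes the caller already L2-normalized the embeddings)."""
    return sum(x * y for x, y in zip(a, b))


def negative_sum(z1: List[Vector], z2: List[Vector], i: int, k: int, tau: float) -> float:
    """U_{i,k} from Eq. (2): view k of sample i against both views of every other sample."""
    anchor = z1[i] if k == 1 else z2[i]
    total = 0.0
    for view in (z1, z2):              # l in {1, 2}
        for j, z_j in enumerate(view):
            if j != i:                 # skip sample i itself
                total += math.exp(dot(anchor, z_j) / tau)
    return total


def info_nce(z1: List[Vector], z2: List[Vector], i: int, k: int, tau: float) -> float:
    """L_i^{(k)} from Eq. (1); the positive term <z_i^(1), z_i^(2)> is shared by both views."""
    pos = math.exp(dot(z1[i], z2[i]) / tau)
    return -math.log(pos / (pos + negative_sum(z1, z2, i, k, tau)))


# Toy batch with N = 2 samples and 2-D unit-norm embeddings (hypothetical values).
z1 = [[1.0, 0.0], [0.0, 1.0]]   # view 1: z_j^{(1)}
z2 = [[1.0, 0.0], [0.0, 1.0]]   # view 2: z_j^{(2)}
loss = info_nce(z1, z2, i=0, k=1, tau=1.0)
print(loss)
```

With these toy values the positive pair has similarity 1 and both negatives have similarity 0, so $U_{0,1} = 2$ and, for $\tau = 1$, the loss equals $\log(1 + 2/e)$.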

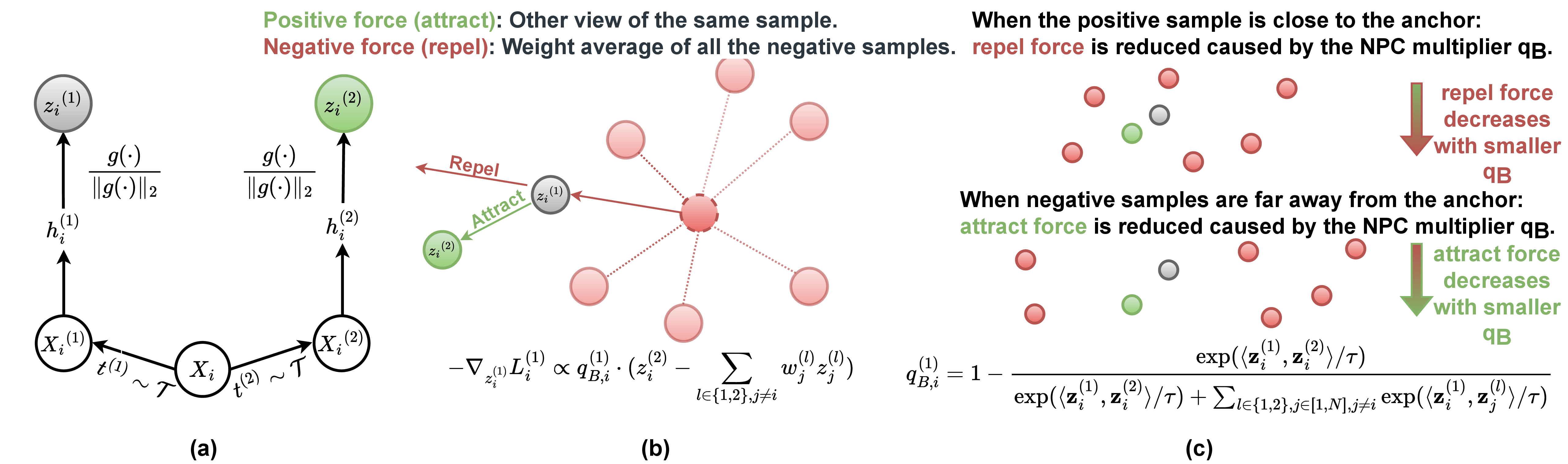

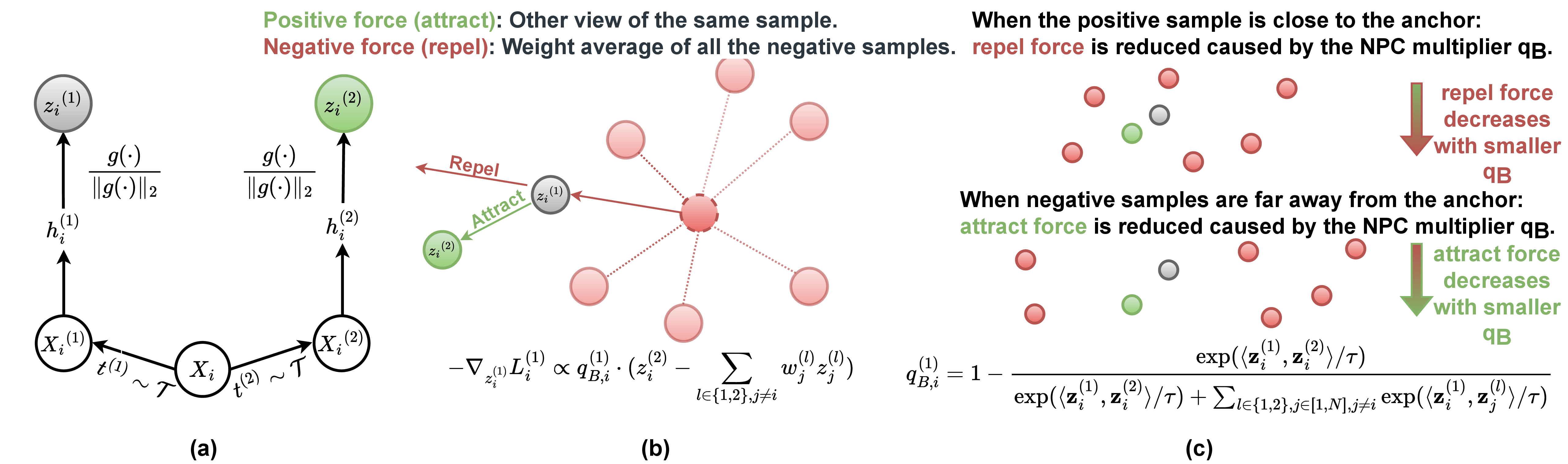

Proposition 1. There exists a Negative-Positive Coupling (NPC) multiplier $q_{B,i}^{(1)}$ in the gradient of $\mathcal{L}_i^{(1)}$:

$$\left\{\begin{array}{l}
-\nabla_{\mathbf{z}_{i}^{(1)}}\mathcal{L}_{i}^{(1)} = \frac{q_{B,i}^{(1)}}{\tau}\left( \mathbf{z}_i^{(2)} - \sum_{l \in \{1,2\},\; j \neq i} \frac{\exp(\langle \mathbf{z}_i^{(1)},\mathbf{z}_j^{(l)} \rangle/\tau)}{U_{i,1}}\cdot \mathbf{z}_j^{(l)}\right) \\[8pt]
-\nabla_{\mathbf{z}_{i}^{(2)}}\mathcal{L}_{i}^{(1)} = \frac{q_{B,i}^{(1)}}{\tau}\cdot \mathbf{z}_i^{(1)} \\[8pt]
-\nabla_{\mathbf{z}_{j}^{(l)}}\mathcal{L}_{i}^{(1)} = -\frac{q_{B,i}^{(1)}}{\tau}\cdot\frac{\exp(\langle \mathbf{z}_i^{(1)},\mathbf{z}_j^{(l)} \rangle/\tau)}{U_{i,1}}\cdot \mathbf{z}_i^{(1)}, \quad j \neq i
\end{array}\right. \tag{3}$$

where the NPC multiplier $q_{B,i}^{(1)}$ is:

$$q_{B,i}^{(1)} = 1 - \frac{\exp(\langle \mathbf{z}_i^{(1)}, \mathbf{z}_i^{(2)} \rangle / \tau)}{\exp(\langle \mathbf{z}_i^{(1)}, \mathbf{z}_i^{(2)} \rangle / \tau) + U_{i,1}} \tag{4}$$
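The second line of Eq. (3) is the quickest to check by hand. Writing $P_i = \exp(\langle \mathbf{z}_i^{(1)}, \mathbf{z}_i^{(2)} \rangle / \tau)$ (a shorthand introduced only for this derivation), Eq. (1) with $k = 1$ becomes $\mathcal{L}_i^{(1)} = -\log P_i + \log(P_i + U_{i,1})$, and $U_{i,1}$ does not depend on $\mathbf{z}_i^{(2)}$ because Eq. (2) excludes $j = i$:

$$\begin{aligned}
-\nabla_{\mathbf{z}_i^{(2)}}\mathcal{L}_i^{(1)} &= \frac{\mathbf{z}_i^{(1)}}{\tau} - \frac{P_i}{P_i + U_{i,1}}\cdot\frac{\mathbf{z}_i^{(1)}}{\tau} = \left(1 - \frac{P_i}{P_i + U_{i,1}}\right)\frac{\mathbf{z}_i^{(1)}}{\tau} = \frac{q_{B,i}^{(1)}}{\tau}\,\mathbf{z}_i^{(1)}, \\
q_{B,i}^{(1)} &= \frac{U_{i,1}}{P_i + U_{i,1}} \in (0, 1).
\end{aligned}$$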

By symmetry, an analogous NPC multiplier $q_{B,i}^{(k)}$ appears in the gradient of $\mathcal{L}_i^{(k)}$ for every $k \in \{1,2\}$ and $i \in [\![1,N]\!]$.
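One way to sanity-check Proposition 1 numerically is to compare the analytic gradients of Eq. (3) against central finite differences of $\mathcal{L}_i^{(1)}$. The plain-Python sketch below assumes the two views are stored as lists of lists with `z1[j]` $= \mathbf{z}_j^{(1)}$ and `z2[j]` $= \mathbf{z}_j^{(2)}$ (0-based indices) and that $\langle\cdot,\cdot\rangle$ is a dot product. The function names `loss_i1`, `npc_grads`, and `numeric_neg_grad` and the toy embeddings are hypothetical illustrations, not part of the original method.

```python
import math
from typing import Dict, List, Tuple

Matrix = List[List[float]]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def loss_i1(z1: Matrix, z2: Matrix, i: int, tau: float) -> float:
    """L_i^{(1)}: Eq. (1) with k = 1 and U_{i,1} from Eq. (2)."""
    pos = math.exp(dot(z1[i], z2[i]) / tau)
    u = sum(math.exp(dot(z1[i], v[j]) / tau)
            for v in (z1, z2) for j in range(len(v)) if j != i)
    return -math.log(pos / (pos + u))


def npc_grads(z1: Matrix, z2: Matrix, i: int, tau: float):
    """Return q_{B,i}^{(1)} (Eq. 4) and the three negative gradients of Eq. (3)."""
    anchor, dim = z1[i], len(z1[i])
    pos = math.exp(dot(anchor, z2[i]) / tau)
    # Negative weights exp(<z_i^(1), z_j^(l)> / tau), keyed by (l, j) with j != i.
    w: Dict[Tuple[int, int], float] = {
        (l, j): math.exp(dot(anchor, v[j]) / tau)
        for l, v in ((1, z1), (2, z2)) for j in range(len(v)) if j != i
    }
    u = sum(w.values())
    q = 1.0 - pos / (pos + u)  # NPC multiplier, Eq. (4)
    views = {1: z1, 2: z2}
    # Softmax-weighted average of the negatives (the sum inside line 1 of Eq. 3).
    mix = [sum(wt / u * views[l][j][d] for (l, j), wt in w.items()) for d in range(dim)]
    g_anchor = [q / tau * (z2[i][d] - mix[d]) for d in range(dim)]
    g_pos = [q / tau * anchor[d] for d in range(dim)]
    g_neg = {key: [-q / tau * wt / u * anchor[d] for d in range(dim)] for key, wt in w.items()}
    return q, g_anchor, g_pos, g_neg


def numeric_neg_grad(z1: Matrix, z2: Matrix, i: int, tau: float,
                     l: int, j: int, eps: float = 1e-6) -> List[float]:
    """Central-difference estimate of -dL_i^{(1)}/dz_j^{(l)}; perturbs in place, then restores."""
    target = z1 if l == 1 else z2
    grad = []
    for d in range(len(target[j])):
        orig = target[j][d]
        target[j][d] = orig + eps
        up = loss_i1(z1, z2, i, tau)
        target[j][d] = orig - eps
        down = loss_i1(z1, z2, i, tau)
        target[j][d] = orig
        grad.append(-(up - down) / (2 * eps))
    return grad


# Toy batch with N = 3 samples and unit-norm 2-D embeddings (hypothetical values).
z1 = [[0.6, 0.8], [1.0, 0.0], [0.0, 1.0]]
z2 = [[0.8, 0.6], [0.0, -1.0], [-1.0, 0.0]]
q, g_anchor, g_pos, g_neg = npc_grads(z1, z2, i=0, tau=0.5)
print(q, g_anchor, numeric_neg_grad(z1, z2, 0, 0.5, l=1, j=0))
```

Every analytic gradient returned by `npc_grads` is built from the same scalar `q`, which is exactly the coupling that Proposition 1 describes.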

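All partial gradients in Eq. (3) are scaled by the same NPC multiplier $q_{B,i}^{(1)}$, so the positive and negative terms cannot be weighted independently. Since $q_{B,i}^{(1)}$ approaches 0 whenever the positive term dominates the denominator of Eq. (1), this coupling is problematic in the following cases: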

- When the positive pair is already close (easy positive), $q_B$ is small, so the gradient from informative (hard) negatives is suppressed as well.

- When the negative samples are far away (easy negatives), $U_{i,k}$ is small and so is $q_B$, which reduces the gradient from an informative (hard) positive.

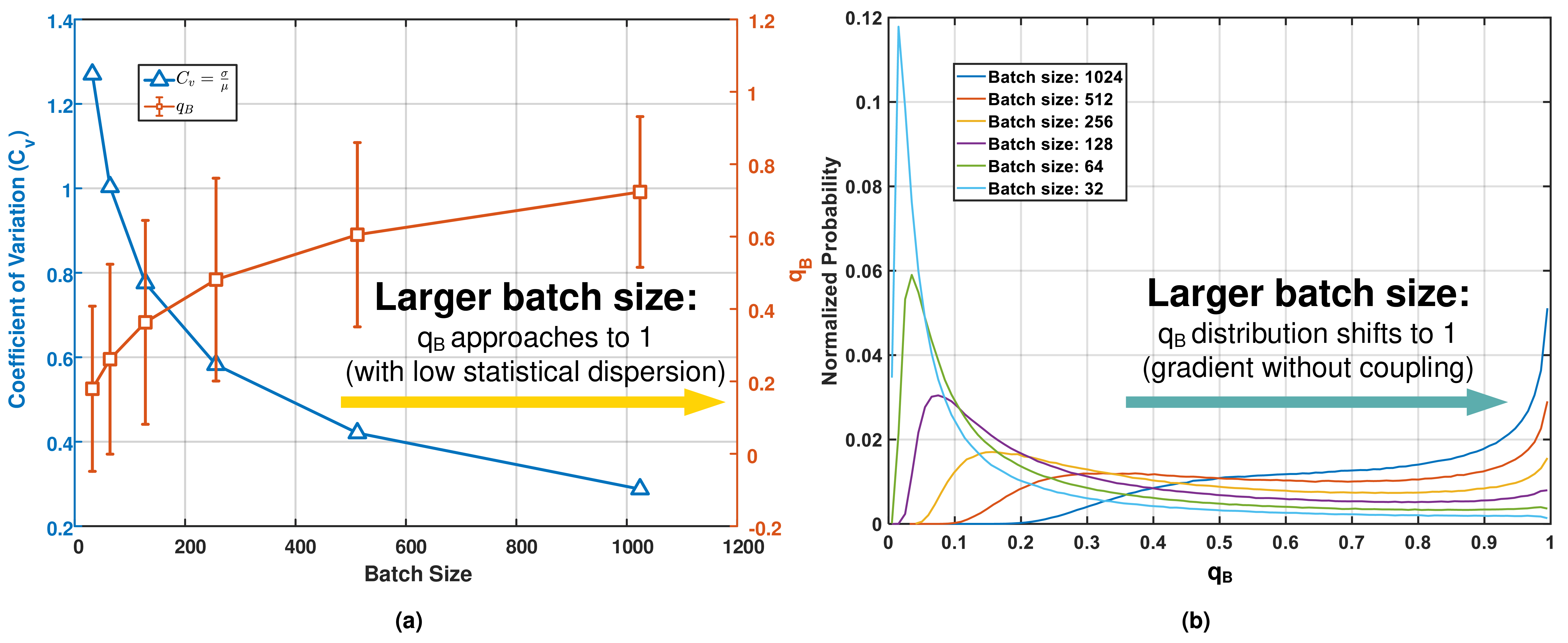

- With smaller batch sizes, $U_{i,k}$ sums over fewer negatives ($2(N-1)$ terms), so the classification task becomes easier, the values of $q_B$ cluster near 0, and learning efficiency drops sharply.
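The three cases can be illustrated with a toy evaluation of Eq. (4). The sketch below assumes, purely for illustration, that all negatives of a sample share one similarity value and that $\tau = 0.1$; real batches have a spread of similarities, and the function name `npc_multiplier` and all numbers are hypothetical. It evaluates Eq. (4) in the equivalent form $q = U/(\exp(s^+/\tau) + U)$, where $s^+$ denotes the positive-pair similarity.

```python
import math
from typing import Sequence


def npc_multiplier(pos_sim: float, neg_sims: Sequence[float], tau: float) -> float:
    """Eq. (4) rewritten as U / (exp(s_pos / tau) + U), with U built as in Eq. (2)."""
    pos = math.exp(pos_sim / tau)
    u = sum(math.exp(s / tau) for s in neg_sims)
    return u / (pos + u)


TAU = 0.1  # hypothetical temperature

# Easy positive: same 62 negatives (N = 32), higher positive similarity.
hard_pos = npc_multiplier(0.3, [0.2] * 62, TAU)
easy_pos = npc_multiplier(0.9, [0.2] * 62, TAU)

# Easy negatives: same positive, negatives pushed from similarity 0.4 down to -0.4.
hard_neg = npc_multiplier(0.5, [0.4] * 62, TAU)
easy_neg = npc_multiplier(0.5, [-0.4] * 62, TAU)

# Batch size: a batch of N samples contributes 2(N - 1) negatives to U_{i,k}.
q_by_batch = {n: npc_multiplier(0.8, [0.0] * (2 * (n - 1)), TAU) for n in (32, 256, 1024)}

print(f"easy positive: q {hard_pos:.3f} -> {easy_pos:.3f}")
print(f"easy negatives: q {hard_neg:.3f} -> {easy_neg:.3f}")
for n, q in q_by_batch.items():
    print(f"N = {n:4d}: q = {q:.3f}")
```

Computing $q$ as `u / (pos + u)` rather than `1 - pos / (pos + u)` is algebraically the same but avoids cancellation when $q$ is close to 0, which is the regime these cases produce.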

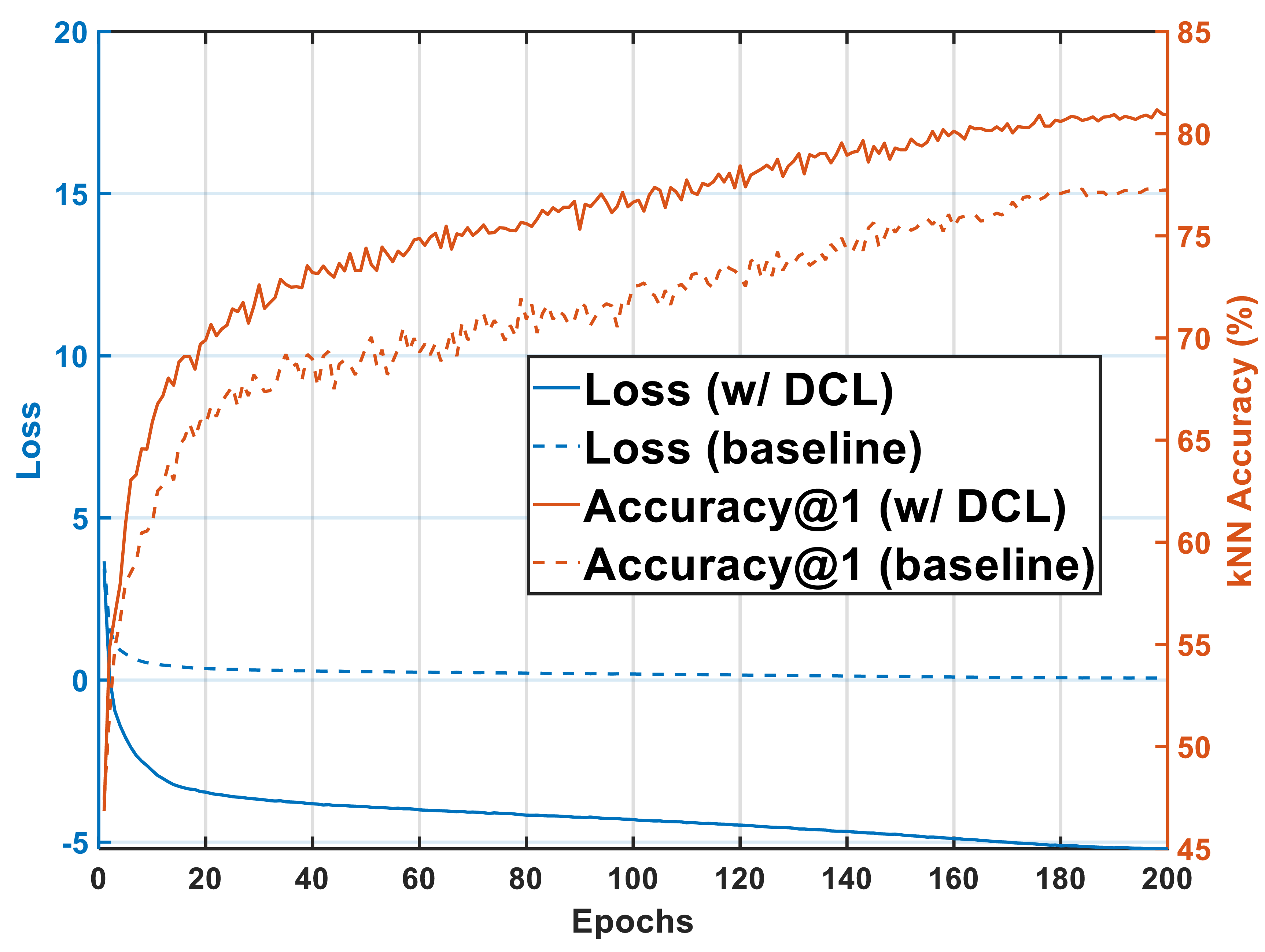

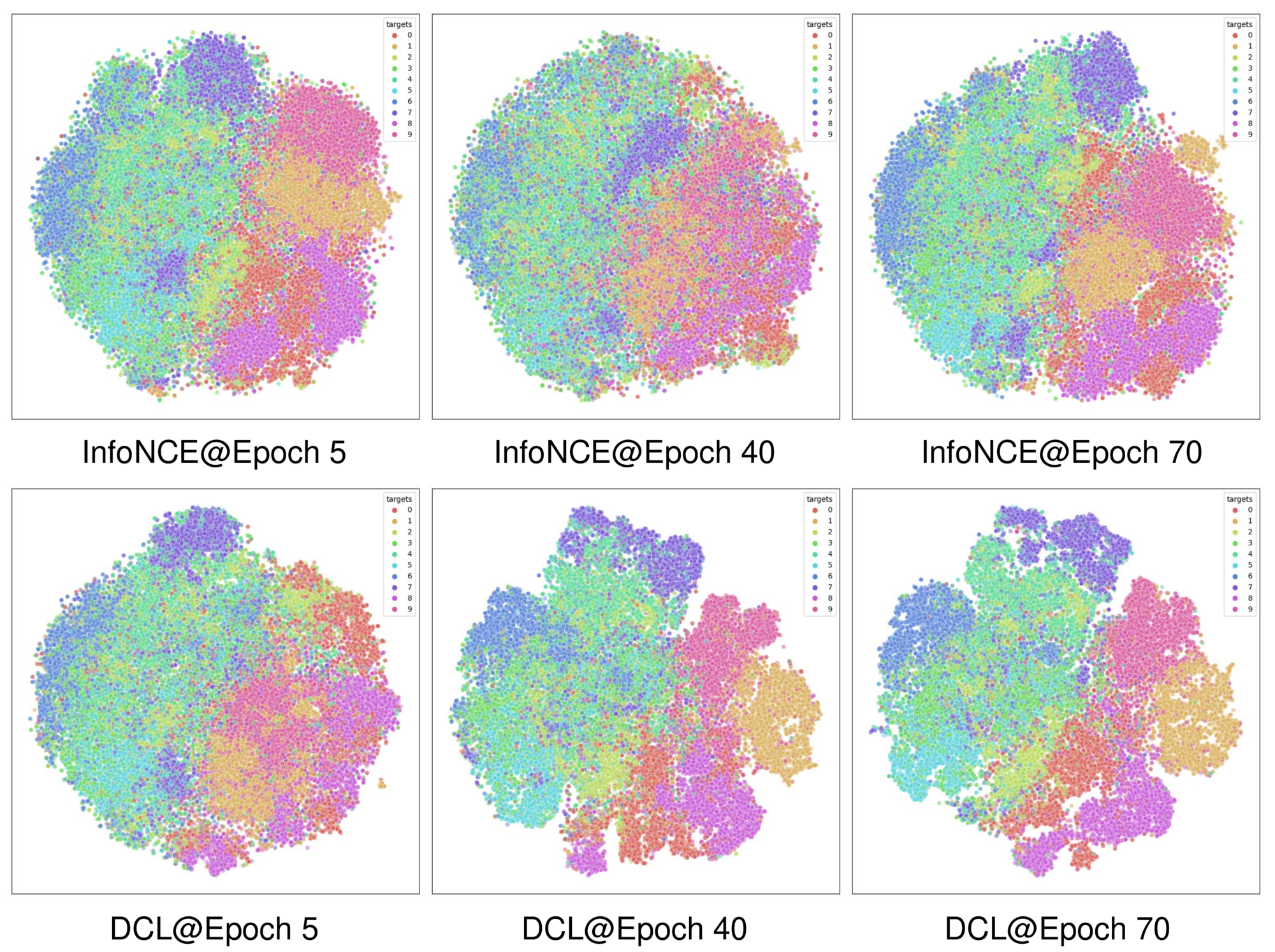

Figure 2. (a) SimCLR framework. (b) The gradient is modulated by the NPC multiplier $q_B$. (c) Two failure cases: easy positives suppress negative gradients (top), and easy negatives suppress positive gradients (bottom).